Many companies are deploying AI. Very few are structuring it.

What started as lightweight experimentation has quickly turned into operational dependency. Teams use AI to draft content, analyze data, summarize research, generate code, and support customer workflows. In some organizations, AI is already influencing decisions that affect revenue, compliance, and reputation.

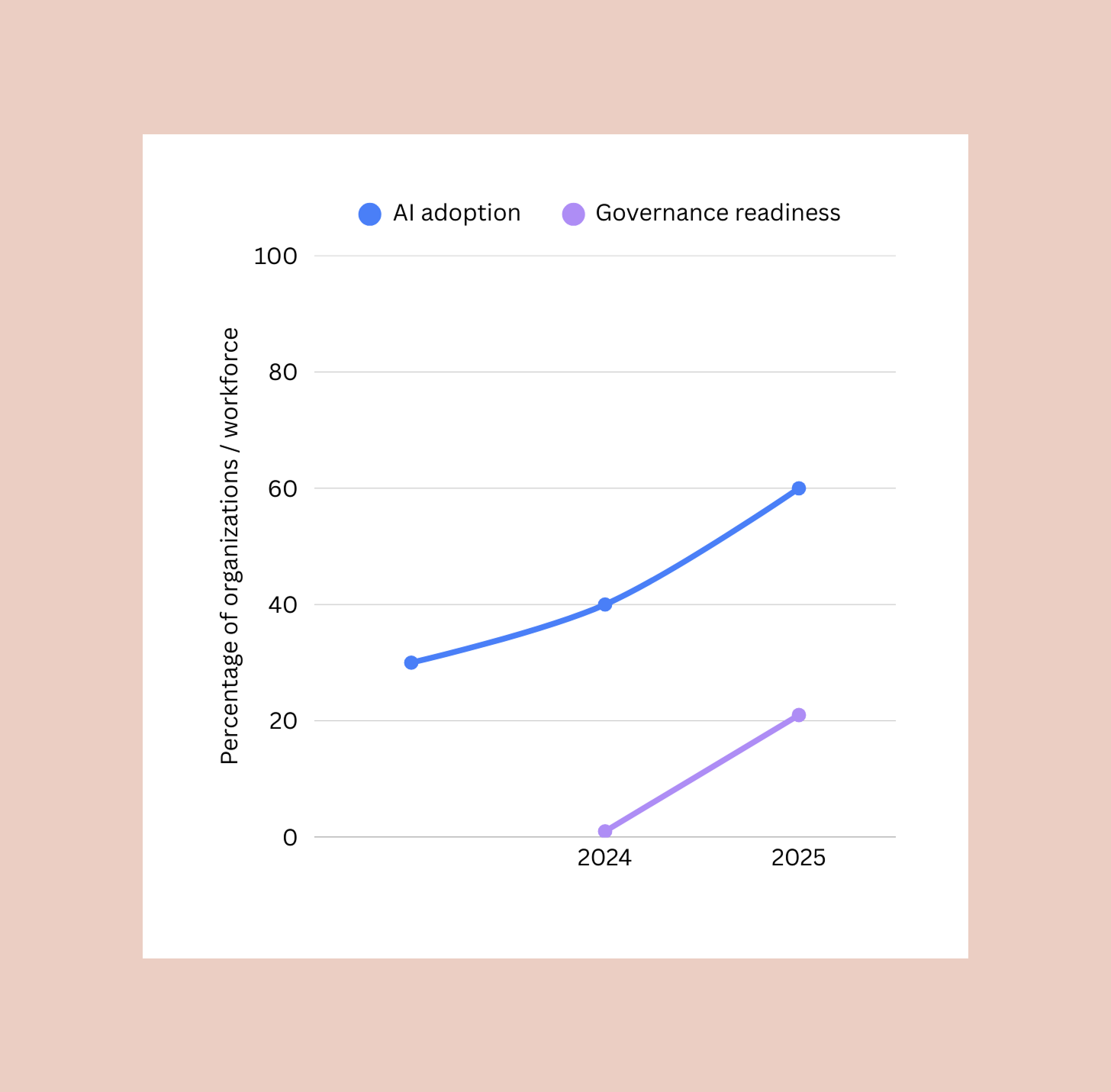

But governance has not kept pace. Most companies treat AI as a productivity layer. They rarely treat it as infrastructure. That gap will not hold for long.

From Assistants to Agents

Early AI tools functioned like enhanced search engines or writing aids. They required direct human prompting and oversight. The risk surface was limited and relatively contained. That is changing.

AI systems are becoming more autonomous. They can retrieve information, execute tasks, interact with external tools, and make decisions across workflows. In some environments, they are beginning to act with limited independence.

When AI becomes operational, the questions shift:

- Who is accountable for its outputs?

- How is bias measured and corrected?

- What happens when a model drifts?

- How is sensitive data protected?

- What controls exist if something goes wrong?

These are not abstract ethical concerns. They are architectural ones.

What AI Governance Really Means

AI governance is often described as a set of policies that ensure AI systems are safe, ethical, and compliant. That definition is technically correct, but incomplete. In practice, governance is a system of controls embedded into how AI is built, deployed, and monitored. It moves beyond principles and into measurable processes.

Many governance frameworks now follow a lifecycle model. The NIST AI Risk Management Framework, for example, organizes this work into four ongoing functions: Govern (set policies and accountability), Map (identify risks in context), Measure (assess and track those risks), and Manage (prioritize and respond to them).

That may sound procedural, but in reality it touches product design, data architecture, security posture, and user experience. Governance is not something you write in a policy document. It is something you build into your systems.

Why This Matters Now

Regulation is accelerating. The European Union’s AI Act will enter more substantial enforcement phases beginning in 2026, with fines for the most serious violations reaching up to 35 million euros or 7% of global annual turnover. Even for companies based in the United States, global markets and partnerships mean these rules cannot be ignored.

But compliance is only part of the story. Trust is the bigger issue.

Organizations cannot scale AI without internal and external confidence in how it operates. If employees are quietly using unauthorized AI tools, sensitive data may be exposed. If customers cannot understand how AI influences decisions, credibility suffers.

Governance is the mechanism that makes AI usable at scale. Without it, AI remains unpredictable.

Governance Is a UX Problem

This is where the conversation often misses something important. AI governance is not just legal or technical—it is experiential.

If a user cannot tell when AI is involved, that affects transparency. If an AI system produces inconsistent outputs, that affects trust. If a model makes recommendations without clear context, that affects usability. Governance influences how safe, reliable, and understandable an AI-enabled product feels.

For teams thinking seriously about AI readiness, governance must connect to UX strategy. Controls need to be visible where appropriate. Fail-safes must be intentional. Permissions and data boundaries must be clear.

An experienced UX agency does more than design interfaces. It designs systems that account for risk, transparency, and long-term scalability. As AI becomes embedded in digital products, governance becomes part of the user experience.

Emerging Governance Concepts

Several ideas are beginning to shape how organizations approach this next phase.

Agentic governance addresses a new challenge. When autonomous systems execute tasks independently, accountability becomes less obvious. Organizations need clear responsibility frameworks that define who owns outcomes when AI acts within predefined parameters.
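One way to make a responsibility framework concrete is a small registry that ties each agent to a named owner and an explicit set of permitted actions. The sketch below is illustrative only: the `AgentPolicy` class, the `authorize` function, and the agent, owner, and action names are all hypothetical and do not come from any specific governance framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical record tying an agent to an accountable owner."""
    owner: str  # person or team accountable for the agent's outcomes
    allowed_actions: frozenset = field(default_factory=frozenset)


# Illustrative registry; every name here is an assumption for this sketch.
REGISTRY = {
    "support-bot": AgentPolicy("support-lead", frozenset({"draft_reply", "lookup_order"})),
    "research-agent": AgentPolicy("data-team", frozenset({"search_docs", "summarize"})),
}


def authorize(agent: str, action: str) -> dict:
    """Return an audit record: is the action within bounds, and who owns it?"""
    policy = REGISTRY.get(agent)
    if policy is None:
        # Unregistered agents have no owner, so nothing is permitted.
        return {"agent": agent, "action": action, "allowed": False, "owner": None}
    return {
        "agent": agent,
        "action": action,
        "allowed": action in policy.allowed_actions,
        "owner": policy.owner,
    }
```

Returning a record rather than a bare yes or no means every decision can be logged with its owner attached, which is the point of an accountability model.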

AI Security Posture Management (AI-SPM) is another evolving layer. It focuses on identifying vulnerabilities such as prompt injection, jailbreak attempts, and data leakage in real time. As AI systems interact with external inputs, this monitoring becomes essential.
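To give a rough sense of what this kind of monitoring looks for, here is a minimal sketch of a text screen that flags likely prompt injection phrases and obvious data leakage. It is a simple heuristic under stated assumptions, not how any particular AI-SPM product works: the pattern lists and the `screen_text` function are hypothetical, and real attacks routinely evade keyword matching.

```python
import re

# Illustrative phrases only; real injection attempts vary widely.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]

# Rough patterns for data that should not leave the organization.
LEAK_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def screen_text(text: str) -> list[str]:
    """Return finding labels for the text; an empty list means nothing matched."""
    findings = []
    if any(p.search(text) for p in INJECTION_PATTERNS):
        findings.append("possible_prompt_injection")
    for label, pattern in LEAK_PATTERNS.items():
        if pattern.search(text):
            findings.append(f"possible_leak:{label}")
    return findings
```

A screen like this would sit in front of the model for inbound text and behind it for outbound text, with findings routed to whoever owns the system rather than silently dropped.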

For smaller teams, there is growing interest in minimum viable governance. Not every organization needs a full enterprise compliance apparatus, but every organization needs baseline controls that protect data, clarify accountability, and reduce risk without slowing innovation.

The common thread is this: governance must scale with capability.


Shadow AI and the Internal Risk Layer

There is another dimension many companies underestimate.

Even if leadership has not formally adopted AI tools, employees already have. Marketing teams use generative AI to draft campaigns. Sales teams summarize call transcripts. Engineers experiment with coding assistants.

This shadow layer introduces real risk. Sensitive documents may be uploaded into public models. Proprietary information may be exposed. Outputs may be trusted without verification. Governance is not about restricting innovation. It is about creating a safe path forward.

Clear guidelines, approved tools, monitoring frameworks, and structured workflows allow teams to benefit from AI without compromising integrity.
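An approved-tools guideline can be expressed as a simple rule: each tool is cleared for data up to a certain sensitivity level, and anything not on the list is off limits. The sketch below assumes a three-level classification and two made-up tool names; `Sensitivity`, `APPROVED_TOOLS`, and `may_use` are hypothetical and would need to reflect your own policy.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Assumed three-level data classification, lowest to highest."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


# Hypothetical allowlist: tool name -> highest sensitivity it is approved for.
APPROVED_TOOLS = {
    "internal-assistant": Sensitivity.CONFIDENTIAL,
    "public-chatbot": Sensitivity.PUBLIC,
}


def may_use(tool: str, data_level: Sensitivity) -> bool:
    """Allow use only if the tool is listed and cleared for this data level."""
    ceiling = APPROVED_TOOLS.get(tool)
    return ceiling is not None and data_level <= ceiling
```

The default is deny: a tool nobody has reviewed is treated the same as one that has been rejected, which gives employees a clear answer instead of a gray area.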

AI Governance as Infrastructure

The most forward-looking companies are not treating governance as a reaction to regulation. They are treating it as a foundational layer of digital infrastructure.

That shift mirrors how organizations matured around cybersecurity and data privacy over the past decade. What was once optional became essential. The same trajectory is unfolding for AI.

If AI is integrated into product experiences, customer journeys, and internal operations, governance must be integrated as well. It should influence architecture decisions, data storage policies, vendor selection, and interface design.

Governance does not slow AI down. It enables it to scale responsibly.

The Real Question

The real question is not whether your organization is using AI—it is whether you have structured it.

- Are there defined accountability models?

- Are outputs measured and monitored?

- Are users informed when AI is influencing decisions?

- Are there controls in place if systems behave unexpectedly?

AI readiness is not just about adoption. It is about design, structure, and trust. The companies that view governance as foundational will scale AI with confidence. The ones that treat it as an afterthought will face friction, risk, and instability.

AI governance is no longer optional. It is operational infrastructure.

If you are integrating AI into your workflows or digital products, governance should be part of the conversation from day one. As a UX agency that builds AI-ready systems, we think about structure, transparency, and scalability alongside interface design. And as a Webflow agency helping teams modernize their digital infrastructure, we ensure the systems behind the experience are as intentional as the experience itself.

If you are rethinking how AI fits into your organization, now is the time to build the foundation. We're Composite, a dedicated Webflow agency in NYC partnering with clients that are ready to future-proof their web presence. View our work or reach out to start a conversation.